Nvidia has announced plans to build AI supercomputers packed with hundreds of thousands of GPUs right in the United States over the next few years.

On April 15, U.S. President Donald Trump shared on social media that Nvidia will manufacture these AI supercomputers domestically. The move comes as Nvidia looks to strengthen its U.S. operations amid tightened restrictions on tech exports to China, which have heavily impacted its business.

What Exactly Is a Supercomputer?

Supercomputers are systems engineered to perform calculations and simulations at speeds and scales far beyond the capabilities of ordinary computers. They’re built for tasks that demand massive simultaneous number crunching, such as weather forecasting or simulating atomic behavior.

Unlike typical machines, supercomputers leverage a huge number of central processing units (CPUs)—sometimes tens of thousands—working in parallel, all interconnected by high-speed networks.

Since their inception in the 1960s, supercomputers have been mainly used in government labs and universities across countries like the U.S., China, and Japan.

How Is an AI Supercomputer Different?

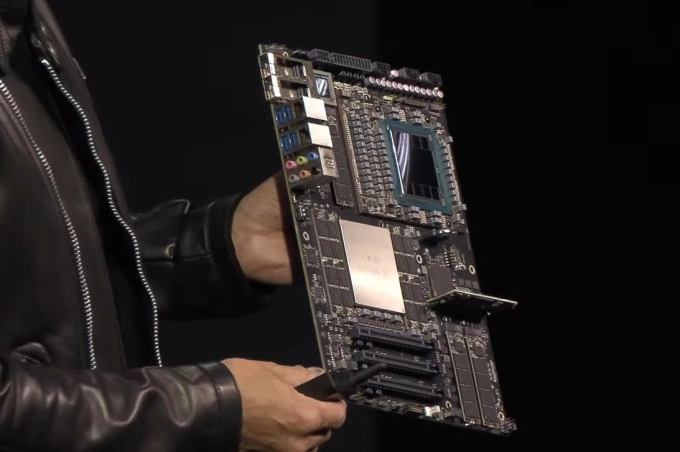

Nvidia’s next-generation system will be built around hundreds of thousands of graphics processing units (GPUs) rather than the traditional CPU-heavy design. GPUs are especially effective for training the artificial intelligence models that power applications like ChatGPT and other chatbots.

While classic supercomputers are often built for government research or academic institutions, Nvidia’s primary clients are now major tech companies like Apple and Microsoft, focusing on commercial AI services.

The difference between a regular AI computer and an AI supercomputer is mainly about scale rather than type. High-performance AI servers are typically clustered together into large facilities known as data centers.

Nvidia hasn’t precisely defined when an AI server crosses the threshold into “supercomputer” territory, but according to The Wall Street Journal, the company emphasizes the ultra-high performance of systems packed with its new Blackwell GPUs.

Where Will Nvidia’s AI Supercomputers Be Built?

Nvidia is setting aside more than 1 million square feet (around 93,000 square meters) of manufacturing space: in Arizona to build and test its Blackwell chips, and in Texas to assemble its AI servers. The company is collaborating with Foxconn to establish a new factory in Houston and with Wistron for another in Fort Worth.

AI servers are better suited to U.S. production than smartphones, in part because the two markets differ sharply in cost sensitivity. For smartphones like the iPhone, even a $100 price increase can deter buyers, making large-scale U.S. production impractical. In contrast, enterprise customers purchasing AI servers are willing to pay a premium for high-quality, domestically manufactured equipment.

Additionally, iPhone production requires hundreds of thousands of workers for manual assembly, making labor costs a massive factor. AI server assembly, however, is highly automated, with key value lying in engineering, design, and software expertise—areas where the U.S. excels.

Choosing Texas over Silicon Valley also makes strategic sense. Texas is close to Mexico, which has become a hub for AI server manufacturing. About 70% of servers imported into the U.S.—AI and otherwise—come from Mexico, according to Taiwan’s Bureau of Foreign Trade.

Adriana Cruz, Executive Director of Economic Development in the Texas Governor’s Office, pointed out that Texas offers abundant energy resources and a business-friendly environment, making it an attractive destination for tech manufacturing.

Earlier, in January, Trump also unveiled the $500 billion Stargate project, aimed at developing AI infrastructure over four years. The initial phase involves setting up data centers in Abilene, Texas. Apple has also announced plans for a new manufacturing plant in the state. Cruz said that Texas is poised to become the “center of AI infrastructure.”

Still, industry experts caution that while such massive investments sound promising, concrete outcomes will need to be closely watched before drawing conclusions.