The world of artificial intelligence (AI) is evolving rapidly, with each breakthrough capturing the attention of developers and tech enthusiasts. Recently, OpenAI released GPT-OSS, a family of powerful open-weight AI models that, on some benchmarks, rival GPT-4o and OpenAI’s smaller reasoning models. In this article, MiniToolAI explores the features, performance, and real-world applications of these new models.

What Is GPT-OSS? A High-Performance Open-Source AI Model

OpenAI has officially released two open-weight models: GPT-OSS-120B and GPT-OSS-20B. These large language models (LLMs) are licensed under Apache 2.0 and deliver strong performance at significantly lower operational cost.

Built for efficiency, GPT-OSS is optimized to run on consumer hardware, and it outperforms open models of similar size on complex reasoning tasks.

Highlights

- GPT-OSS-120B: Achieves near-parity with OpenAI o4-mini on core reasoning benchmarks and runs efficiently on a single 80 GB GPU.

- GPT-OSS-20B: Matches OpenAI o3-mini on common benchmarks and can run on edge devices with just 16 GB of memory.

Both models are strong at few-shot learning, tool use, and Chain-of-Thought (CoT) reasoning. On benchmarks such as Tau-Bench and HealthBench, GPT-OSS even outperforms OpenAI o1 and GPT-4o in certain scenarios.

GPT-OSS is also compatible with OpenAI’s Responses API and is designed for agentic workflows, so developers can give it tools such as web search and Python code execution.
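To make the tool-use claim concrete, here is a minimal sketch of the client side of function calling: a tool schema in the JSON-schema style used by OpenAI-compatible chat APIs, plus a dispatcher that runs a tool call the model emits. The `web_search` function, its parameters, and the `REGISTRY` dict are hypothetical placeholders, not part of any GPT-OSS API, and the exact request and response shapes depend on the serving stack you use.

```python
import json

# Hypothetical tool: a stand-in for a real web-search backend.
def web_search(query: str, max_results: int = 3) -> list:
    """Return placeholder snippets; a real deployment would call a search service here."""
    return [f"result {i + 1} for {query!r}" for i in range(max_results)]

# Tool description in the JSON-schema style used by OpenAI-compatible function calling.
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "web_search",
            "description": "Search the web and return short result snippets.",
            "parameters": {
                "type": "object",
                "properties": {
                    "query": {"type": "string"},
                    "max_results": {"type": "integer", "minimum": 1},
                },
                "required": ["query"],
            },
        },
    }
]

# Maps tool names the model may emit to local Python callables.
REGISTRY = {"web_search": web_search}

def dispatch(tool_call: dict) -> str:
    """Run one model-issued tool call and return its result as a JSON string."""
    name = tool_call["function"]["name"]
    if name not in REGISTRY:
        raise ValueError(f"unknown tool: {name}")
    # In OpenAI-style tool calls, arguments arrive as a JSON-encoded string.
    args = json.loads(tool_call["function"]["arguments"])
    return json.dumps(REGISTRY[name](**args))

if __name__ == "__main__":
    # A tool call as a model might emit it (hand-written here for illustration).
    call = {
        "id": "call_1",
        "type": "function",
        "function": {"name": "web_search", "arguments": '{"query": "gpt-oss", "max_results": 2}'},
    }
    print(dispatch(call))
```

In a full loop, the dispatcher’s JSON result would be sent back to the model as a tool message so it can continue its answer.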

Why GPT-OSS Stands Out: Open, Safe, and Developer-Friendly

A Strong Focus on Safety

GPT-OSS was developed with safety as a priority. The models underwent rigorous testing, including an evaluation of an adversarially fine-tuned version of GPT-OSS-120B under OpenAI’s Preparedness Framework.

On OpenAI’s internal safety benchmarks, GPT-OSS performs comparably to the company’s frontier proprietary models, giving developers added confidence.

Built for Real-World Use

OpenAI has worked with early partners including AI Sweden, Orange, and Snowflake to bring GPT-OSS into real-world applications. Whether the goal is on-premises data hosting, enterprise customization, or domain-specific fine-tuning, GPT-OSS is built for flexibility.

By open-sourcing GPT-OSS, OpenAI enables:

- Individual developers

- Enterprises

- Governments

…to run and customize AI models on their own infrastructure. Combined with OpenAI’s existing API-based models, this gives users more control over performance, cost, and latency.

GPT-OSS Architecture and Training Methods

GPT-OSS achieves its high performance and cost efficiency through advanced architecture and training techniques. Here’s a breakdown of how this powerful open model was developed:

Pretraining: Optimized for Efficiency

GPT-OSS is OpenAI’s first open-weight language model since GPT-2. It uses a Mixture of Experts (MoE) transformer architecture, which activates only a subset of its parameters for each token, reducing computation without sacrificing performance.

- GPT-OSS-120B: 117 billion parameters total, but only 5.1 billion activated per input token.

- GPT-OSS-20B: 21 billion total, with just 3.6 billion activated per token.
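The parameter counts above imply that each token touches only a small slice of the network. As a rough illustration, the toy sketch below shows generic top-k expert routing: a router scores every expert, only the k best are evaluated, and their outputs are mixed with softmax weights. The eight scalar "experts" and the router logits are made up; the article does not describe GPT-OSS’s expert count or routing details, so treat this as a generic MoE sketch rather than the model’s actual implementation.

```python
import math

def softmax(xs: list) -> list:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits: list, k: int) -> list:
    """Pick the k highest-scoring experts and normalize their weights to sum to 1."""
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))

def moe_forward(x: float, experts: list, router_logits: list, k: int) -> float:
    """Combine only the selected experts' outputs; unselected experts are never evaluated."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))

# Toy experts: each is a scalar function standing in for an expert MLP.
experts = [lambda x, s=s: s * x for s in range(8)]
logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]
print(route_top_k(logits, k=2))
print(moe_forward(1.0, experts, logits, k=2))

# Share of parameters active per token, from the figures quoted in this section.
for name, active, total in [("gpt-oss-120b", 5.1, 117), ("gpt-oss-20b", 3.6, 21)]:
    print(f"{name}: {active / total:.1%} of parameters active per token")
```

With the article’s figures, roughly 4.4% of GPT-OSS-120B’s parameters and about 17.1% of GPT-OSS-20B’s are active for any single token.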

GPT-OSS also uses Rotary Positional Embeddings (RoPE) for positional encoding and natively supports context lengths of up to 128K (131,072) tokens, which makes it well suited to processing long documents.
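RoPE encodes position by rotating pairs of query/key dimensions by an angle proportional to the token’s position, so the attention score between two tokens depends only on how far apart they are. A minimal pure-Python sketch of the standard formulation follows; the base of 10000 is the common textbook default and is an assumption here, not necessarily the value or scaling scheme GPT-OSS uses.

```python
import math

def rope(vec: list, pos: int, base: float = 10000.0) -> list:
    """Apply rotary position embedding: rotate each pair (x[2j], x[2j+1]) by pos * base**(-2j/d)."""
    d = len(vec)
    if d % 2:
        raise ValueError("RoPE needs an even vector dimension")
    out = []
    for i in range(0, d, 2):  # i = 2j is the start index of each pair
        theta = pos * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        out.extend([x * c - y * s, x * s + y * c])
    return out

def dot(a: list, b: list) -> float:
    return sum(x * y for x, y in zip(a, b))

q = [0.3, -1.2, 0.8, 0.5]
k = [1.0, 0.4, -0.7, 0.2]
# Query at 5 / key at 2 vs. query at 105 / key at 102: same offset, so (up to rounding) the same score.
print(dot(rope(q, 5), rope(k, 2)), dot(rope(q, 105), rope(k, 102)))
```

Because rotations preserve length, RoPE changes only the angles between vectors, which is what lets the score encode relative rather than absolute position.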

Post-training: Aligning with Human-Like Reasoning

After pretraining, the GPT-OSS models were post-trained with supervised fine-tuning followed by a reinforcement learning (RL) stage, a process similar to the one used for o4-mini.

This helps the models:

- Follow OpenAI’s Model Specification

- Master Chain-of-Thought reasoning

- Provide more accurate, coherent, and aligned responses

Like the o-series models, GPT-OSS supports three reasoning effort levels: low, medium, and high. Developers set the level with a short instruction in the system prompt, trading answer quality against latency to suit each use case.
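A minimal sketch of setting the reasoning level, assuming an OpenAI-compatible chat-style message list and a system prompt line of the form `Reasoning: high` (the convention used in OpenAI’s gpt-oss prompt examples; check your serving stack’s documentation, since some expose reasoning effort as a separate request parameter instead). The helper `build_messages` is hypothetical.

```python
VALID_LEVELS = ("low", "medium", "high")

def build_messages(user_prompt: str, reasoning: str = "medium", instructions: str = "") -> list:
    """Build a chat message list whose system prompt sets the reasoning level (hypothetical helper)."""
    if reasoning not in VALID_LEVELS:
        raise ValueError(f"reasoning must be one of {VALID_LEVELS}, got {reasoning!r}")
    # Assumed convention: a 'Reasoning: <level>' line in the system prompt.
    system = f"Reasoning: {reasoning}"
    if instructions:
        system += "\n" + instructions
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user_prompt},
    ]

# Low effort for a quick lookup, high effort for a multi-step proof.
quick = build_messages("What is the capital of Sweden?", reasoning="low")
deep = build_messages("Prove that the square root of 2 is irrational.", reasoning="high")
print(quick[0]["content"])
print(deep[0]["content"])
```

Lower levels generally return faster because the model spends fewer tokens on its chain of thought; higher levels trade latency for more thorough reasoning.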

Extended Context Window Support

One of GPT-OSS’s standout features is its context window of up to 131,072 tokens, which lets the model handle long documents, complex conversations, and multi-step reasoning in a single pass.

In addition, GPT-OSS supports completions of up to 32,766 tokens, which suits use cases such as:

- Legal and technical document generation

- Book summarization or creation

- Multi-turn conversations in a single request

- Deep contextual understanding in RAG pipelines

This extended capacity puts GPT-OSS among the most flexible models available for real-world applications.
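The two limits in this section interact: prompt and completion share one window, so a long prompt shrinks the room left for output. Below is a small budgeting sketch using the figures quoted above. The helper names are hypothetical, the "token IDs" in the demo are stand-in integers (real counts must come from the model’s own tokenizer), and actual limits may vary by serving provider.

```python
CONTEXT_WINDOW = 131_072  # prompt + completion tokens, as stated in the article
MAX_COMPLETION = 32_766   # completion cap quoted in the article

def completion_budget(prompt_tokens: int, desired: int = MAX_COMPLETION) -> int:
    """Largest completion length that respects both the output cap and the shared context window."""
    if prompt_tokens < 0 or desired < 0:
        raise ValueError("token counts must be non-negative")
    remaining = CONTEXT_WINDOW - prompt_tokens
    if remaining <= 0:
        raise ValueError("prompt alone fills the context window; split or summarize it first")
    return min(desired, MAX_COMPLETION, remaining)

def chunk_for_context(token_ids: list, reserve_for_output: int = MAX_COMPLETION) -> list:
    """Split a long token sequence into pieces that each leave room for the reserved output length."""
    if reserve_for_output < 0:
        raise ValueError("reserve_for_output must be non-negative")
    size = CONTEXT_WINDOW - min(reserve_for_output, MAX_COMPLETION)
    return [token_ids[i:i + size] for i in range(0, len(token_ids), size)]

print(completion_budget(50_000))   # 32766: the output cap is the binding limit
print(completion_budget(120_000))  # 11072: the shared window is the binding limit
# A 250,000-token document split into chunks for a RAG-style pipeline.
print([len(c) for c in chunk_for_context(list(range(250_000)))])
```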

Benchmark Results: GPT-OSS Delivers Outstanding Performance

To assess the real-world capabilities of GPT-OSS, OpenAI evaluated both GPT-OSS-120B and GPT-OSS-20B on widely recognized academic and industry benchmarks covering coding, mathematics, medical knowledge, and tool use.

The models were compared directly with OpenAI’s own reasoning models, including o3, o3-mini, and o4-mini, and the results are impressive.

GPT-OSS-120B: Competing with the Best

Despite being an open-weight model, GPT-OSS-120B outperformed o3-mini and matched or exceeded o4-mini across multiple domains:

- Competitive Programming: Exceptional results on Codeforces, a widely respected benchmark for algorithmic problem-solving.

- General Reasoning: High accuracy on MMLU (Massive Multitask Language Understanding) and HLE (Humanity’s Last Exam), which test logical, linguistic, and domain-specific reasoning.

- Tool Use: Demonstrated robust functionality in TauBench, a benchmark designed to evaluate models’ ability to interact with external tools and APIs.

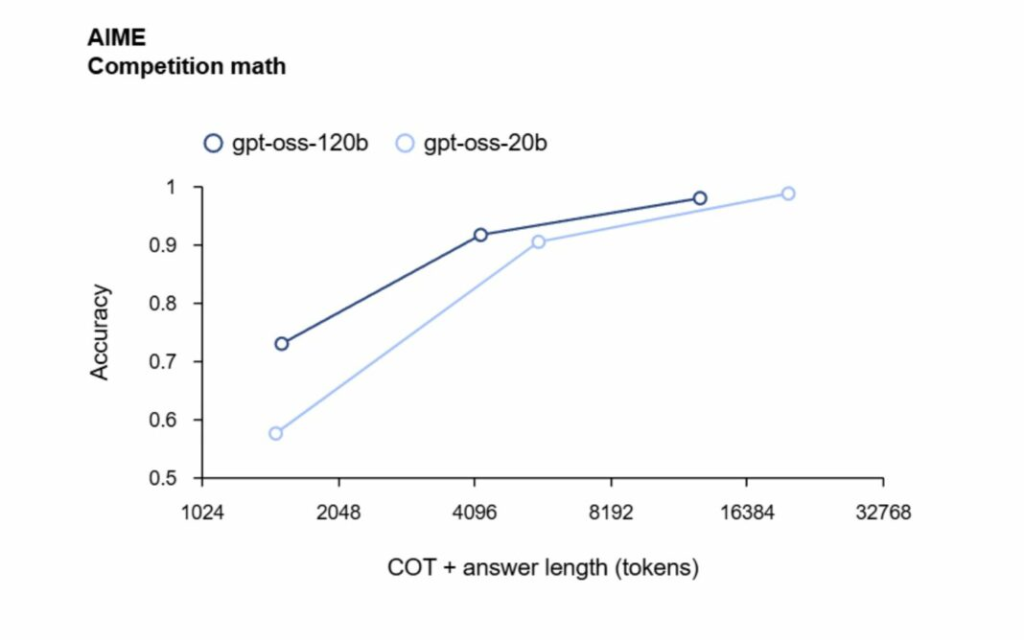

- Medical & Math Evaluations: Strong performance in HealthBench and competitive-level math exams (AIME 2024 & 2025), indicating advanced reasoning in technical and academic fields.

GPT-OSS-20B: Small Size, Big Results

Despite its much smaller size, GPT-OSS-20B is a strong contender. It matches or exceeds o3-mini across the same benchmarks.

In particular, GPT-OSS-20B excels in:

- Competitive Mathematics: Outperforms o3-mini on competition math problems.

- Medical Tasks: Outperforms o3-mini on health-related evaluations such as HealthBench.

These results establish GPT-OSS as one of the most capable open-weight AI models currently available. With strong performance in both its large and compact configurations, GPT-OSS is not just an open alternative; it is a top-tier model ready for real-world deployment.

Where to Download GPT-OSS and System Requirements

Interested in trying GPT-OSS yourself? Here’s everything you need to know about where to download the models and what kind of hardware you’ll need to run them efficiently.

Download GPT-OSS Models

Both GPT-OSS models are available for free on Hugging Face, published by OpenAI.

For the latest updates and documentation, visit the official GitHub repository:

https://github.com/openai/gpt-oss

System Requirements

GPT-OSS models use the MXFP4 quantization format by default, which dramatically reduces memory requirements, but native MXFP4 support is limited to newer GPU architectures.
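To show why MXFP4 saves so much memory, here is a toy, pure-Python sketch of the block format defined in the Open Compute Project (OCP) Microscaling (MX) specification: every block of 32 values shares one power-of-two scale stored in 8 bits, and each value is stored as a 4-bit FP4 (E2M1) number. It illustrates the format only, not the kernels GPT-OSS ships with. The helper names are hypothetical, and ties are rounded toward the smaller magnitude for simplicity, whereas the spec rounds half to even.

```python
import math

# Non-negative magnitudes representable in FP4 E2M1 (1 sign, 2 exponent, 1 mantissa bit).
FP4_E2M1 = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
BLOCK = 32       # MX block size: 32 elements share one scale
E2M1_EMAX = 2    # exponent of the largest FP4 value (6 = 1.5 * 2**2)

def nearest_fp4(x: float) -> float:
    """Round to the nearest FP4 value, saturating at +/-6 (ties go to the smaller magnitude here)."""
    mag = min(FP4_E2M1, key=lambda v: abs(v - abs(x)))
    return math.copysign(mag, x)

def quantize_block(block: list) -> tuple:
    """Return (shared_exponent, fp4_values) for one block of up to 32 floats."""
    amax = max(abs(v) for v in block)
    if amax == 0:
        return 0, [0.0] * len(block)
    # Shared scale 2**e chosen so the largest element lands near the top of the FP4 range.
    e = math.floor(math.log2(amax)) - E2M1_EMAX
    e = max(-127, min(127, e))  # an 8-bit E8M0 scale covers 2**-127 .. 2**127
    scale = 2.0 ** e
    return e, [nearest_fp4(v / scale) for v in block]

def dequantize_block(e: int, codes: list) -> list:
    return [c * 2.0 ** e for c in codes]

def quantize(values: list) -> list:
    return [quantize_block(values[i:i + BLOCK]) for i in range(0, len(values), BLOCK)]

# Storage cost: 4 bits per element plus one 8-bit scale amortized over 32 elements.
BITS_PER_VALUE = 4 + 8 / BLOCK  # 4.25 bits, vs. 16 for bfloat16

weights = [0.75, -0.375, 0.1, -0.02]
e, codes = quantize_block(weights)
print(e, codes, dequantize_block(e, codes))
```

The large values in the block survive exactly, while the small ones are rounded coarsely (0.1 becomes 0.125 and -0.02 becomes zero): that precision loss is the price of storing each weight in about a quarter of the bits bfloat16 needs.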

GPT-OSS-20B

- ~16 GB VRAM (when using MXFP4)

- Ideal for high-end consumer GPUs (e.g., RTX 5090)

- Memory usage increases to ~48 GB if using bfloat16 instead

GPT-OSS-120B

- Requires ≥60 GB VRAM or a multi-GPU setup

- Best suited for data center GPUs like NVIDIA H100 or GB200

Note: MXFP4 is only supported on Hopper or newer architectures, including:

- NVIDIA H100, GB200

- RTX 50-series (e.g., RTX 5090)
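The VRAM figures above follow largely from bits per parameter. Here is a back-of-the-envelope sketch that assumes every parameter is stored at the given width and ignores the KV cache, activations, and framework overhead, so real usage runs higher than these weights-only numbers; any tensors kept at higher precision would add to the total as well. The 4.25-bit figure comes from MXFP4’s 4-bit elements plus one shared 8-bit scale per 32-element block.

```python
MXFP4_BITS = 4 + 8 / 32  # 4-bit elements + one 8-bit scale per 32-element block = 4.25 bits
BF16_BITS = 16

def weight_memory_gb(params_billion: float, bits_per_param: float) -> float:
    """Weights-only footprint in GB (10**9 bytes): billions of params * bits / 8."""
    return params_billion * bits_per_param / 8

for name, params in [("gpt-oss-20b", 21), ("gpt-oss-120b", 117)]:
    print(f"{name}: ~{weight_memory_gb(params, MXFP4_BITS):.1f} GB in MXFP4, "
          f"~{weight_memory_gb(params, BF16_BITS):.1f} GB in bfloat16")
```

About 11 GB of MXFP4 weights is consistent with the 20B model fitting in roughly 16 GB, and about 42 GB of bfloat16 weights plus runtime overhead lines up with the ~48 GB figure. The 120B estimate of about 62 GB matches the ≥60 GB requirement.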

Try GPT-OSS Online for Free

Don’t have the hardware to run GPT-OSS locally? No problem!

You can try GPT-OSS directly in your browser, completely free, through MiniToolAI: GPT-OSS Free Online

No installation. No GPU required. Just open the link and explore the power of GPT-OSS in real-time!

Final Thoughts: Open-Source AI Just Got a Major Upgrade

In this article, we introduced GPT-OSS, the groundbreaking open-weight language model series released by OpenAI. From benchmark results that rival GPT-4o and o4-mini to real-world readiness across coding, reasoning, healthcare, and tool use, GPT-OSS shows that open models can now match, and on some benchmarks exceed, proprietary ones.

Here’s a quick recap of what makes GPT-OSS a game-changer:

- ✅ Two powerful models: GPT-OSS-120B and GPT-OSS-20B

- ✅ Free to use under the Apache 2.0 license

- ✅ State-of-the-art reasoning, tool use, and Chain-of-Thought capabilities

- ✅ Optimized for both consumer GPUs and enterprise infrastructure

- ✅ Compatible with OpenAI’s Responses API and function calling

- ✅ Accessible online for free via MiniToolAI’s web interface

Whether you’re a developer, a researcher, or a tech enthusiast, GPT-OSS opens the door to high-performance AI without the limitations of closed ecosystems.

Ready to explore the next generation of open AI?

Start building, experimenting, and innovating: GPT-OSS is here, and it’s free for everyone.

Source: OpenAI