Over the past few years, machine learning has become one of the hottest topics in tech, often mentioned alongside artificial intelligence (AI). Today, it’s being applied widely across industries, from healthcare and finance to robotics and marketing. In this article, we’ll break down what machine learning actually is, go over its core concepts, and explore why it has become such a powerful and widely adopted technology.

Note before we begin: I’m still relatively new to machine learning, so this article may have some inaccuracies. If you spot anything that doesn’t look quite right, feel free to share your thoughts so I can improve it!

So, What Exactly Is Machine Learning?

If you’ve ever searched for “what is machine learning,” you’ve probably come across dozens of different definitions. After doing my own research, here’s a simple summary:

Machine learning (ML) is a subfield of artificial intelligence (AI) focused on building systems that can learn and improve from experience or data—without being explicitly programmed for specific tasks. Essentially, machines use algorithms to identify patterns in data, make predictions, and even adapt their behavior based on what they’ve “learned.”

Machine learning problems usually fall into two major categories:

- Regression tasks, which predict a numeric value, like estimating housing prices or forecasting sales.

- Classification tasks, which predict a category, such as recognizing handwritten text or identifying objects in images.
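To make the two categories concrete, here is a minimal sketch in plain Python. The house sizes, prices, and email word counts are made-up numbers, and `fit_line` and `nearest_label` are illustrative helper names rather than functions from any library. The first task returns a number, the second returns a category.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept for one input feature."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


def nearest_label(value, labeled_examples):
    """Return the label of the closest known example (1-nearest-neighbour)."""
    closest = min(labeled_examples, key=lambda example: abs(example[0] - value))
    return closest[1]


# Numeric output: made-up house sizes (square metres) and prices (thousands).
sizes = [50, 80, 100, 120]
prices = [150, 240, 300, 360]
slope, intercept = fit_line(sizes, prices)
predicted_price = slope * 90 + intercept  # the answer is a number

# Categorical output: made-up counts of suspicious words per email.
emails = [(1, "ham"), (2, "ham"), (8, "spam"), (9, "spam")]
predicted_label = nearest_label(7, emails)  # the answer is a category

print(predicted_price, predicted_label)
```

Real projects would normally use a library for both steps; the point here is only the difference in the kind of output.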

The Machine Learning Workflow

To understand how machine learning works in practice, let’s walk through a typical workflow:

- Data Collection

Every ML project starts with data. You need a dataset for the model to learn from, which can be gathered manually or sourced from publicly available datasets. Reliable, high-quality data generally leads to better model performance.

- Preprocessing

Raw data often contains noise or irrelevant features. In this step, you clean and format the data: this includes normalization, labeling, encoding features, and sometimes dimensionality reduction. Data preparation is often reported to take up the majority of a project's time.

- Training the Model

This is where the machine learns. Using the processed data, you train the model so it can find patterns and relationships in the data.

- Evaluating the Model

After training, the model is assessed on data it has not seen, using performance metrics such as accuracy. There is no universal threshold for a "good" score: what counts as reliable depends on the specific use case, and accuracy alone can be misleading when one class is much more common than the others.

- Improvement & Optimization

If the model's performance isn't up to expectations, it might need fine-tuning or retraining with different parameters or additional data. This cycle may repeat several times.
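The workflow above can be sketched end to end in a few dozen lines of plain Python. Everything here is an assumption made for illustration: the data is synthetic, the two classes "A" and "B" are invented, and the model is a simple nearest-centroid classifier rather than anything a real project would necessarily choose.

```python
import random

rng = random.Random(0)  # fixed seed so the run is repeatable


# 1. Data collection: synthetic two-feature points for two made-up classes.
def make_points(center, label, count=50):
    return [([rng.gauss(center[0], 5), rng.gauss(center[1], 5)], label)
            for _ in range(count)]


data = make_points((150, 50), "A") + make_points((180, 80), "B")
rng.shuffle(data)

# Hold out 20% of the data so evaluation uses examples the model never saw.
split = int(0.8 * len(data))
train, test = data[:split], data[split:]


# 2. Preprocessing: min-max normalisation, fitted on the training set only.
def fit_scaler(rows):
    columns = list(zip(*rows))
    return [min(c) for c in columns], [max(c) for c in columns]


def scale(row, lows, highs):
    return [(v - lo) / (hi - lo) for v, lo, hi in zip(row, lows, highs)]


lows, highs = fit_scaler([x for x, _ in train])
train = [(scale(x, lows, highs), y) for x, y in train]
test = [(scale(x, lows, highs), y) for x, y in test]


# 3. Training: store the average feature vector (centroid) of each class.
def train_centroids(rows):
    sums, counts = {}, {}
    for x, y in rows:
        totals = sums.setdefault(y, [0.0] * len(x))
        for i, value in enumerate(x):
            totals[i] += value
        counts[y] = counts.get(y, 0) + 1
    return {y: [t / counts[y] for t in totals] for y, totals in sums.items()}


def predict(centroids, x):
    """Pick the class whose centroid is closest to x."""
    def squared_distance(center):
        return sum((a - b) ** 2 for a, b in zip(x, center))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


model = train_centroids(train)

# 4. Evaluation: fraction of held-out points classified correctly.
correct = sum(predict(model, x) == y for x, y in test)
accuracy = correct / len(test)
print(f"test accuracy: {accuracy:.2f}")

# 5. Improvement: if accuracy were too low, you would revisit the data,
#    the features, or the model choice and run the loop again.
```

The synthetic classes are deliberately well separated, so the score will be high; real data is rarely this clean.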

Main Types of Machine Learning

Machine learning can be classified in several ways, but the most common breakdown is:

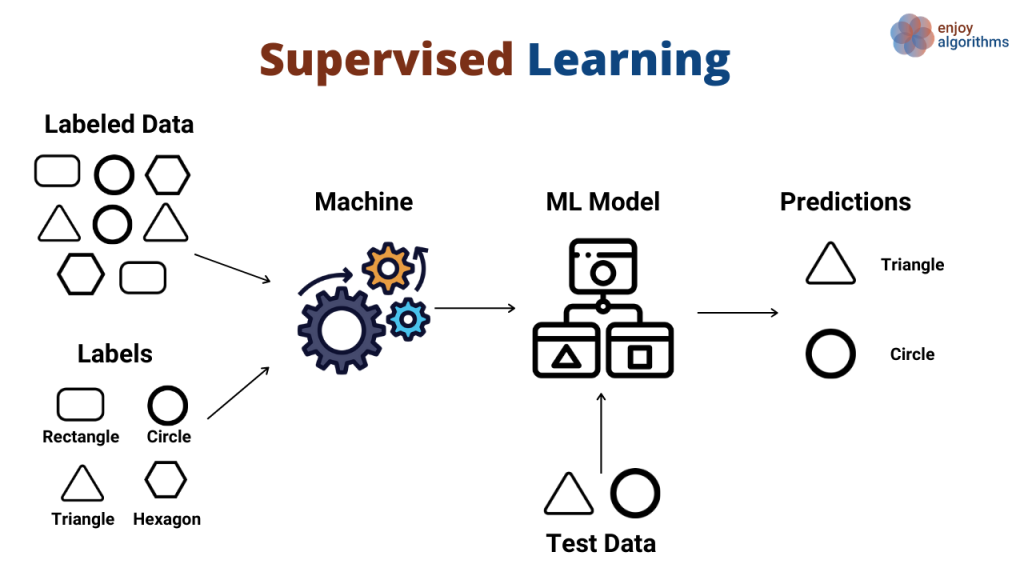

- Supervised Learning: Learning from labeled data. Each input (X) comes with an associated output (Y), which guides the model during training.

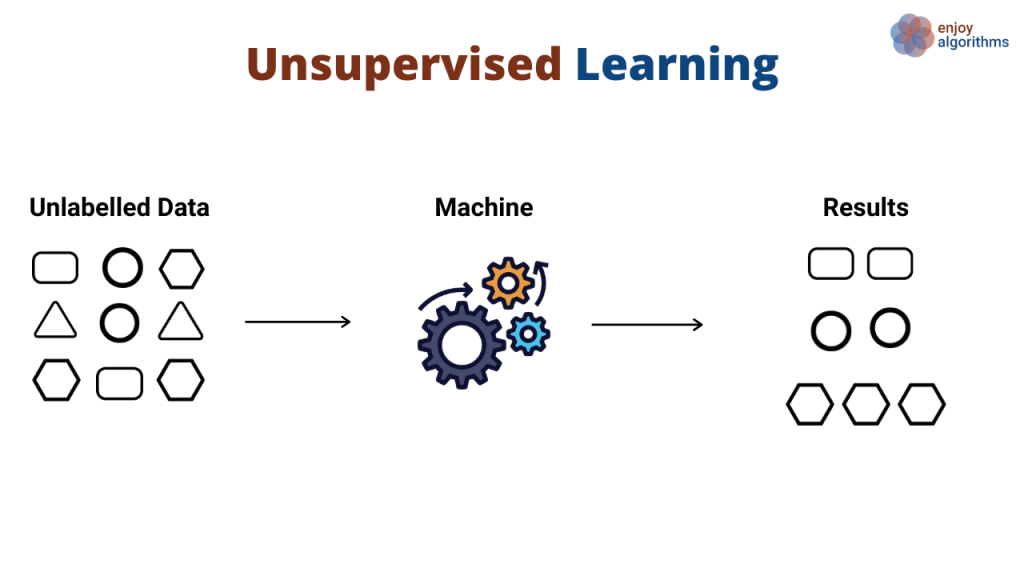

- Unsupervised Learning: Learning from unlabeled data. The model tries to find patterns or groupings (clustering) without predefined labels.
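As a rough illustration of learning without labels, the sketch below groups unlabeled numbers into two clusters. It is a stripped-down, one-dimensional version of the k-means idea; `two_means` is a hypothetical helper written for this article, and it assumes the input contains at least two distinct values.

```python
def two_means(values, iterations=10):
    """Split 1-D values into two clusters and return the two cluster centers."""
    low, high = min(values), max(values)  # simple deterministic starting centers
    for _ in range(iterations):
        # Assign every value to whichever center is currently closer.
        group_low = [v for v in values if abs(v - low) <= abs(v - high)]
        group_high = [v for v in values if abs(v - low) > abs(v - high)]
        # Move each center to the average of the values assigned to it.
        low = sum(group_low) / len(group_low)
        high = sum(group_high) / len(group_high)
    return low, high


# No labels anywhere: the algorithm only sees the raw measurements.
measurements = [1.0, 1.2, 0.8, 9.0, 9.5, 8.5]
center_low, center_high = two_means(measurements)
print(round(center_low, 2), round(center_high, 2))
```

In a supervised setting each measurement would also carry the "right answer" (Y); here the structure has to be discovered from the inputs alone.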

Other types you may come across include:

- Semi-supervised Learning: Combines a small amount of labeled data with a larger set of unlabeled data.

- Reinforcement Learning: A reward-based system where the model learns through trial and error.

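- Deep Learning: A specialized subset involving multi-layer neural networks that can model complex structures. It describes the kind of model rather than the kind of supervision, so it can be combined with any of the approaches above.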
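The trial-and-error idea behind reinforcement learning can be sketched with one of its simplest settings, a multi-armed bandit. The reward probabilities, the `run_bandit` name, and the epsilon-greedy strategy are all choices made for this example, not something prescribed by the article: the agent mostly repeats the action that has paid off best so far, and occasionally tries a random one.

```python
import random


def run_bandit(reward_probs, steps=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent on a bandit whose actions pay 1 or 0 at random."""
    rng = random.Random(seed)
    estimates = [0.0] * len(reward_probs)  # running average reward per action
    pulls = [0] * len(reward_probs)        # how often each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(len(reward_probs))  # explore: random action
        else:
            # Exploit: pick the action with the best estimate so far.
            action = max(range(len(estimates)), key=estimates.__getitem__)
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        pulls[action] += 1
        # Incremental update of the average reward for the chosen action.
        estimates[action] += (reward - estimates[action]) / pulls[action]
    return estimates, pulls


# Three hypothetical actions with hidden success rates of 20%, 50%, and 80%.
estimates, pulls = run_bandit([0.2, 0.5, 0.8])
best_action = max(range(len(estimates)), key=estimates.__getitem__)
print(best_action, [round(e, 2) for e in estimates])
```

The agent is never told which action is best; it only sees rewards, which is what separates this setting from supervised learning.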

Key Terms and Concepts

Here are a few important terms you’ll encounter in machine learning:

- Dataset: A collection of raw data used for training and testing. Think of it as a spreadsheet with many rows and columns.

- Data Point: A single entry in the dataset, often representing one observation or instance.

- Training Data vs. Test Data: The dataset is usually split into two sets. The training set is used to teach the model, while the test set is used to evaluate how well it performs on new, unseen data (commonly split 80/20).

- Feature Vector: A numerical representation of a data point, with each dimension corresponding to a specific attribute or feature.

- Model: The trained machine learning algorithm that can make predictions or decisions based on what it has learned from the training data.
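Several of these terms can be shown together in a short sketch. The records, the `CITIES` list, and both helper functions are hypothetical and exist only for illustration. Each dictionary is one data point, `to_feature_vector` turns it into numbers (using one-hot encoding for the city), and `train_test_split` performs the 80/20 split.

```python
import random

CITIES = ["Hanoi", "Lyon", "Porto"]  # hypothetical category values


def to_feature_vector(record):
    """Turn one data point (a dict) into a list of numbers."""
    one_hot = [1.0 if record["city"] == city else 0.0 for city in CITIES]
    return [float(record["area_m2"]), float(record["rooms"])] + one_hot


def train_test_split(rows, test_ratio=0.2, seed=42):
    """Shuffle a copy of the rows, then hold out test_ratio of them for testing."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    test_size = round(len(shuffled) * test_ratio)
    return shuffled[test_size:], shuffled[:test_size]


# A tiny made-up dataset of ten data points.
dataset = [
    {"area_m2": 40 + 5 * i, "rooms": 1 + i % 4, "city": CITIES[i % 3]}
    for i in range(10)
]
vectors = [to_feature_vector(record) for record in dataset]
train_set, test_set = train_test_split(vectors)

print(vectors[0])                     # [40.0, 1.0, 1.0, 0.0, 0.0]
print(len(train_set), len(test_set))  # 8 2
```

Shuffling before splitting matters when the rows are stored in some meaningful order, since otherwise the test set may not resemble the training set.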

Real-World Applications of Machine Learning

Machine learning systems are now deeply embedded in our daily lives, forming a backbone of the modern internet. They power product recommendations on Amazon, suggest what to watch next on Netflix, and personalize just about every digital experience.

Every Google search relies on machine learning to interpret user queries and tailor results to individual preferences. Likewise, Gmail’s spam and phishing filters use sophisticated ML models to keep your inbox safe from unwanted or malicious messages.

Perhaps the most recognizable use of machine learning is in virtual assistants like Siri, Alexa, Google Assistant, and Cortana. These tools depend heavily on ML for speech recognition, natural language understanding, and even scanning the internet to answer questions on the fly.

But those are just the most visible applications. Behind the scenes, machine learning plays a critical role across nearly every industry:

- Computer vision in autonomous vehicles, drones, and delivery robots

- Conversational AI in chatbots and voice assistants

- Facial recognition in surveillance systems, particularly in countries like China

- Medical imaging tools that detect tumors or analyze genetic sequences linked to disease

- Predictive maintenance for smart infrastructure and IoT systems

- Enabling cashier-less retail experiences

- Real-time translation and transcription for meetings

In 2020, OpenAI’s GPT-3 stunned the world with its human-like writing abilities. Trained on hundreds of billions of tokens of text, drawn largely from web pages along with books and Wikipedia, GPT-3 could generate coherent text and plausible-sounding responses on a wide range of topics.

Looking ahead, machine learning may pave the way for robots that learn simply by watching humans. In fact, researchers at Nvidia are already developing deep learning systems that teach robots to perform tasks through human demonstration.

ML has made its way into almost every sector:

- Finance & Banking

- Healthcare & Biology

- Agriculture

- Information Retrieval

- Automation & Robotics

- Chemistry

- Computer Networking

- Space Science

- Advertising & Marketing

- Natural Language Processing

- Computer Vision

A classic example is weather prediction. Traditionally, this relies on physical equations combined with past observations. But as data scales into the millions or billions of entries, humans simply can’t handle that volume efficiently. ML models, on the other hand, can learn from massive datasets quickly and, in many cases, produce accurate forecasts.

In situations where human calculation falls short due to scale or complexity, machine learning steps in as a powerful alternative.

How Are Machine Learning and Deep Learning Different?

Machine Learning (ML) refers to the process where humans train computers to improve at performing specific tasks through experience. Simply put, it’s about creating systems that get better at a task as they see more data, without being explicitly programmed for every scenario.

Deep Learning (DL), on the other hand, is a specialized subset of machine learning that relies on artificial neural networks—systems inspired by the way the human brain works. These networks are capable of automatically learning features from vast amounts of data without needing human-designed rules.

Unlike traditional machine learning, deep learning demands significantly more data and computational power. For example, instead of manually teaching a system what a cat looks like, deep learning models can be trained by feeding them thousands—or even millions—of cat images, allowing them to learn and recognize patterns on their own.
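To give a feel for the building block behind neural networks, here is a sketch of a single artificial neuron (a perceptron) learning the logical AND function. This is not deep learning: real deep networks stack many such units in layers and are trained with gradient-based methods on far more data. The function names and the choice of the AND task are assumptions made for the example.

```python
def predict(weights, bias, inputs):
    """Fire (1) if the weighted sum of the inputs plus the bias is positive."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20):
    """Classic perceptron rule: nudge the weights whenever a prediction is wrong."""
    weights, bias = [0, 0], 0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or 1
            weights = [w + error * x for w, x in zip(weights, inputs)]
            bias += error
    return weights, bias


# Truth table of AND: the output is 1 only when both inputs are 1.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_SAMPLES)
print([predict(weights, bias, inputs) for inputs, _ in AND_SAMPLES])  # [0, 0, 0, 1]
```

Nobody writes the rule "output 1 only if both inputs are 1" into the code; the weights that implement it are found from the examples.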

Key Differences Between Machine Learning and Deep Learning

Human Involvement

One of the primary distinctions lies in how much human intervention is required. Machine learning typically needs human input to structure data, select features, and tweak algorithms to improve accuracy. Deep learning models, by contrast, can learn directly from raw data with minimal human guidance, thanks to their layered network structure.

Hardware Requirements

ML algorithms are generally simpler and can be run on standard computers. DL models, due to their complexity and the volume of data they process, often require high-performance GPUs or specialized hardware to function efficiently.

Time to Train and Operate

Classical machine learning models are usually quicker to train and deploy, but getting good results can take longer overall because of the manual feature engineering and tuning involved. Deep learning models take more time to train, sometimes hours or days, but once trained they can usually produce predictions quickly, and on tasks like image or speech recognition they are often more accurate.

Data Requirements

Deep learning thrives on big data. While traditional ML might work well with thousands of data points, DL models often need millions to perform effectively. This makes DL ideal for tasks where large datasets are available and nuance is essential.

Real-World Applications

Both technologies are widely used today. ML powers many everyday tools like email spam filters, fraud detection in banking, and diagnostic aids in healthcare. DL enables more complex applications such as autonomous vehicles, voice assistants, and surgical robots—systems that require deep understanding and real-time decision-making.

Final Thoughts

Hopefully, this article gave you a clear, beginner-friendly overview of what machine learning is, how it works, and why it’s so important today. If you found it helpful or have suggestions for improvement, feel free to leave a comment—I’d love your feedback!

Thanks for reading!